Use the Workbench CLI from a container

Prior reading: Command-line interface overview

Purpose: This document provides detailed instructions for using the Workbench CLI from within a custom-built Docker container.

Use case and basic principles

At times, you may find it useful to use the Workbench CLI (command-line interface) from within a Docker container. This might be the case, for example, if you wanted to automate some aspect of workspace management programmatically using the CLI.

This document walks through the process of creating and using such a container image. The container will run on a Google Cloud node that is configured to use a Verily Workbench workspace service account. The Workbench CLI will be authenticated in the running container using that service account.

We give an example of using the container image in a dsub task that is configured to use a Workbench workspace service account and that takes as input a script of wb commands to run.

For details on how to build and push a container image, see the Workbench instructions for building and pushing container images; you can follow either the Cloud Build or the docker instructions.

Prerequisites

These instructions assume that you have already installed the Workbench CLI, or are working in a cloud app where it has been installed, and that you have logged in and identified the workspace you want to work with as described in the Basic Usage Examples.

Step-by-step instructions

1. Create a Dockerfile

We'll first define a Dockerfile for the container image. Create a new subdirectory, and write the Dockerfile shown below to that directory.

Filename: Dockerfile

FROM ubuntu:20.04

ARG DEBIAN_FRONTEND=noninteractive
ENV TZ=Etc/UTC

RUN apt-get update -y && \
    apt-get install -y wget zip unzip tzdata curl jq

# install python
RUN apt-get update && apt-get install -y \
    python3 \
    python3-pip
RUN python3 -m pip --no-cache-dir install --upgrade \
    pip \
    setuptools

# install gcloud sdk
RUN wget -nv https://dl.google.com/dl/cloudsdk/release/google-cloud-sdk.zip && \
    unzip -qq google-cloud-sdk.zip -d tools && \
    rm google-cloud-sdk.zip && \
    tools/google-cloud-sdk/install.sh --usage-reporting=false \
        --path-update=false --bash-completion=false \
        --disable-installation-options && \
    tools/google-cloud-sdk/bin/gcloud -q components update \
        gcloud core gsutil && \
    tools/google-cloud-sdk/bin/gcloud config set component_manager/disable_update_check true

# Java install
RUN apt-get update && apt-get install -y openjdk-17-jre

# wb-cli install
RUN curl -L https://storage.googleapis.com/bkt-workbench-artifacts/download-install.sh | bash
RUN mkdir -p /bin
RUN mv wb /bin
ENV PATH=$PATH:/bin
ENV PATH=$PATH:/tools/google-cloud-sdk/bin

# Set browser manual login
RUN wb config set browser MANUAL

# A script that runs in this container must first auth with app-default-credentials before
# running any other wb cli commands:
# wb auth login --mode=APP_DEFAULT_CREDENTIALS

The definition is straightforward: starting from an Ubuntu base image, it installs some utilities, Python, gcloud, Java, and the Workbench CLI. It then runs wb config set browser MANUAL, which sets up wb auth to work in the container context.

In this example, we don't add an ENTRYPOINT or CMD to the container definition, because we'll instead pass dsub a script to run in the container. However, if you use the container image in a different context, you could add whatever ENTRYPOINT or CMD fits your use case, along with any additional installations you need.

2. Build and push the container image

Follow the instructions here to build and push your container image to a Verily Workbench workspace Artifact Registry repository. You can do this from either your local machine or from a Workbench notebook app.
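If you build with docker rather than Cloud Build, the build-and-push step can be sketched as follows. This is a minimal sketch, not the Workbench instructions themselves: it assumes docker and gcloud are available, that an Artifact Registry Docker repository already exists in the us-central1 location (matching the image URIs used in the dsub commands later in this document), and that REPO_NAME, IMAGE_NAME, TAG, and RUN_BUILD are hypothetical variables you set yourself. Save it as, say, build.sh (a hypothetical file name) in the directory that contains the Dockerfile.

```shell
#!/usr/bin/env bash
# Sketch only: REPO_NAME, IMAGE_NAME, TAG, and RUN_BUILD are hypothetical
# variables; the us-central1 location matches the image URIs used with dsub.

# Print the Artifact Registry URI for an image.
# Usage: image_uri PROJECT_ID REPO_NAME IMAGE_NAME TAG
image_uri() {
  printf 'us-central1-docker.pkg.dev/%s/%s/%s:%s\n' "$1" "$2" "$3" "$4"
}

# Build the Dockerfile in the current directory and push it to the given URI.
# Each step runs only if the previous one succeeded.
build_and_push() {
  local uri="$1"
  # Register gcloud as a Docker credential helper for this registry host.
  gcloud auth configure-docker us-central1-docker.pkg.dev --quiet &&
    docker build -t "$uri" . &&
    docker push "$uri"
}

# Only build and push when explicitly requested, so this file can be sourced safely.
if [ "${RUN_BUILD:-0}" = "1" ]; then
  # Exit with a message if a required variable is missing.
  : "${GOOGLE_CLOUD_PROJECT:?set GOOGLE_CLOUD_PROJECT}" "${REPO_NAME:?set REPO_NAME}" "${IMAGE_NAME:?set IMAGE_NAME}"
  IMAGE="$(image_uri "$GOOGLE_CLOUD_PROJECT" "$REPO_NAME" "$IMAGE_NAME" "${TAG:-latest}")"
  echo "Image URI: ${IMAGE}"
  build_and_push "$IMAGE"
fi
```

For example, `RUN_BUILD=1 REPO_NAME=my-repo IMAGE_NAME=wb-cli TAG=v1 bash build.sh` in a notebook app, where GOOGLE_CLOUD_PROJECT is already set (export it yourself elsewhere). The printed URI is the value to pass to dsub's --image flag.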

Note the URI for the container image; you'll use it later on when configuring dsub.
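Once the image is pushed, and if docker is available where you built it, you can sanity-check that the Dockerfile's PATH settings expose the tools the dsub script will need. The helper below is a hypothetical sketch (the name check_tools is illustrative); it only uses the shell builtin command -v, so it works the same on your machine and inside the Ubuntu-based container.

```shell
# Hypothetical helper: report each named command that is not on PATH,
# and return non-zero if any are missing.
# Usage: check_tools CMD...
check_tools() {
  local tool missing=0
  for tool in "$@"; do
    if ! command -v "$tool" >/dev/null 2>&1; then
      echo "missing: $tool" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Example: run the check inside the image (IMAGE is the URI you noted above).
# declare -f copies the function definition into the container's bash.
#   docker run --rm "$IMAGE" bash -c "$(declare -f check_tools); check_tools wb gcloud gsutil java python3 jq"
```

A silent run with exit status 0 means every tool was found; otherwise each missing command is listed on stderr.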

3. Optional: Create a storage bucket

Next, if you don't already have a controlled resource bucket in your workspace, create one now.

The workspace_setup notebook, when run in a workspace notebook app, sets up some workspace resources for you, including a "temporary" storage bucket resource that automatically deletes its contents after two weeks. You can also create workspace buckets via the Workbench UI.

4. Create a script for dsub to run in the container

Next, we'll create a small example script for dsub to run in the container.

The first line is always required: it authenticates the wb installation. As noted above, this line assumes that the VM on which the container runs is configured to use a Workbench service account.

The rest of the script can be what you like. This example sets a workspace to use, and then lists the resources in that workspace. We'll set the workspace ID as an environment variable in the dsub call, shown below.

(For this example script, the workspace must already exist. You could alternatively define a script that creates a new workspace and then uses it.)

Filename: test-script.sh

wb auth login --mode=APP_DEFAULT_CREDENTIALS
wb workspace set --id=${WORKSPACE_ID}
wb resource list
# add additional commands... e.g., create a new resource

Save your script to a file (e.g., test-script.sh).
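Because the script depends on WORKSPACE_ID arriving through dsub's --env flag, it can help to fail fast with a clear message when the variable is missing, instead of letting wb workspace set run with an empty ID. The helper below is a hypothetical addition (require_env is not part of the Workbench CLI or dsub). It reads variables with printenv, so it sees only exported environment variables, which is how values passed with --env reach the container.

```shell
# Hypothetical helper (not part of the Workbench CLI): print an error for each
# named environment variable that is unset or empty, and return non-zero if any are.
# Uses printenv, so it only sees exported variables, such as those set by dsub --env.
require_env() {
  local name missing=0
  for name in "$@"; do
    if [ -z "$(printenv "$name")" ]; then
      echo "error: environment variable $name is not set" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Example use in test-script.sh, after the wb auth login line:
#   require_env WORKSPACE_ID || exit 1
#   wb workspace set --id=${WORKSPACE_ID}
```

With this guard, a job launched without --env WORKSPACE_ID stops immediately, and the error message appears in the dsub logs.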

5. Use dsub to run some wb commands

Now we are set up to run a dsub task. dsub is already installed in Workbench notebook environments, or you can install it locally if you like. The dsub command requires a logging location; use a workspace-controlled Cloud Storage bucket as described in Step 3 above.

If you ran the workspace_setup notebook to create a bucket that autodeletes old content, you may want to use that for the logging bucket.
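The helpers below sketch how to turn a bucket URI into the logging folder used in the dsub commands that follow, and where to look for your script's output afterward. They are hypothetical (the function names are illustrative). The log file naming in stdout_log is an assumption based on the dsub logging documentation for a single-task job without retries, when --logging points to a folder rather than to a .log file.

```shell
# Hypothetical helpers for the dsub logging location (names are illustrative).

# Turn a bucket URI (for example, the output of `wb resource resolve --id <resource_id>`)
# into a logs folder URI, dropping any trailing slash first.
logging_dir() {
  local bucket="${1%/}"
  case "$bucket" in
    gs://?*) printf '%s/logs\n' "$bucket" ;;
    *) echo "error: expected a gs:// URI, got '$1'" >&2; return 1 ;;
  esac
}

# Assumed location of a single-task job's stdout log when --logging is a folder.
# Usage: stdout_log LOGGING_DIR JOB_ID
stdout_log() {
  printf '%s/%s-stdout.log\n' "${1%/}" "$2"
}

# Example:
#   LOGGING="$(logging_dir "$(wb resource resolve --id <resource_id>)")"
#   ... pass --logging "$LOGGING" to dsub ...
#   gsutil cat "$(stdout_log "$LOGGING" <JOB_ID>)"
```

The stdout log is where the output of commands such as wb resource list in test-script.sh would appear.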

For the dsub command-line arguments, you need to specify your workspace's project ID, the service account associated with your workspace, a Cloud Storage URI for the logging directory, the path to the input script, and the URI of the Docker container image you pushed to the workspace's Artifact Registry.

We're also passing the workspace ID as an environment variable, to use it in the script.

Run the dsub command in a notebook app

If you're running dsub in a workspace notebook app, environment variables will be set for your project ID and workspace service account. Edit the following command with the rest of your details:

dsub --project $GOOGLE_CLOUD_PROJECT --provider google-cls-v2 --logging gs://<YOUR_BUCKET>/logs \
    --env WORKSPACE_ID=<YOUR_WORKSPACE_ID> \
    --service-account $GOOGLE_SERVICE_ACCOUNT_EMAIL \
    --network network --subnetwork subnetwork \
    --image us-central1-docker.pkg.dev/${GOOGLE_CLOUD_PROJECT}/<YOUR_REPO_NAME>/<CONTAINER_NAME>:<TAG> \
    --script test-script.sh --wait

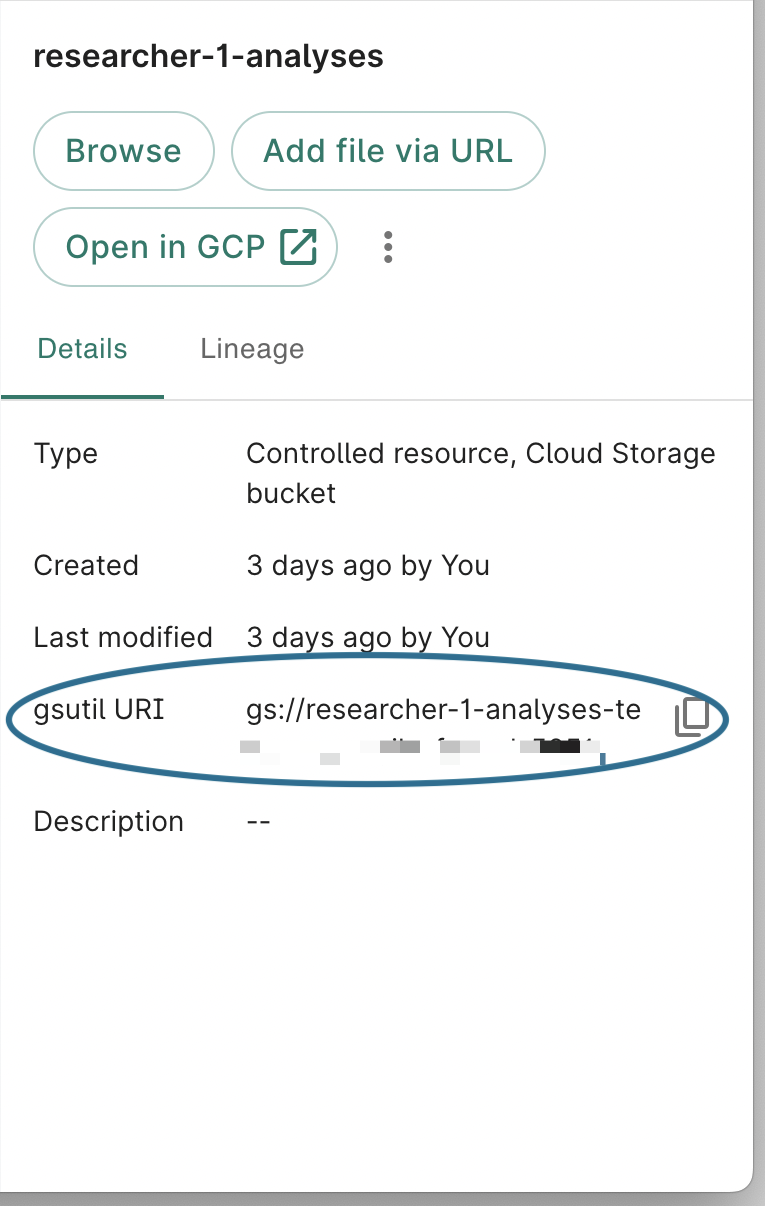

You can get the Cloud Storage URI of a Cloud Storage resource via wb resource resolve --id <resource_id>, or from the "details" panel for that resource in the Workbench UI.

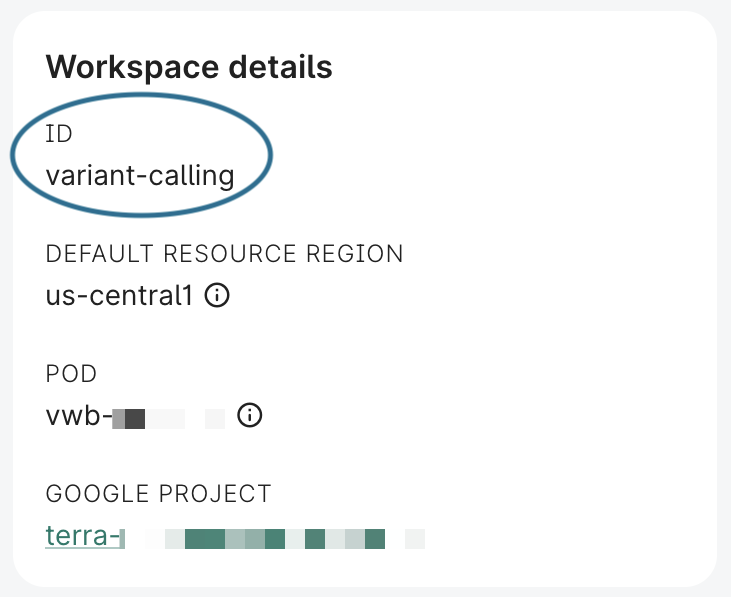

You can find the workspace ID in the Overview panel in the UI, or via wb status or wb workspace describe.

Run the dsub command on your local machine

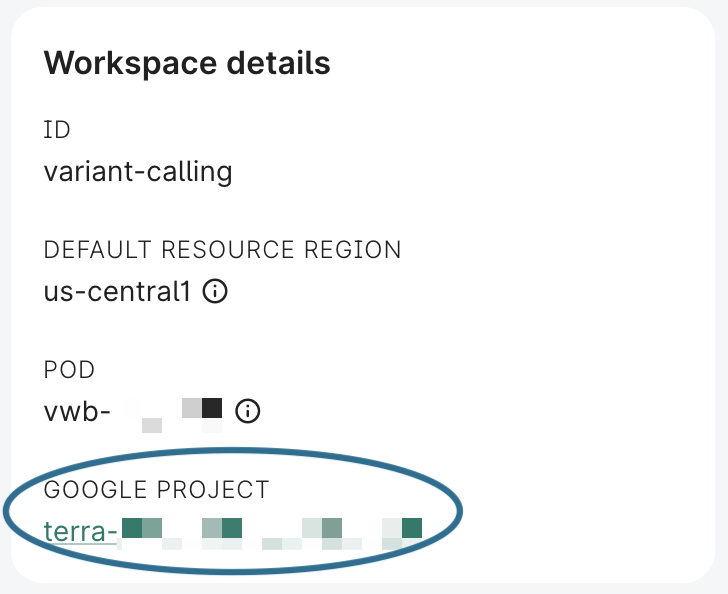

Alternatively, if you're running the command on your local machine, you will also need to specify your workspace's project ID and service account. You can find the project ID in the Workbench UI, in the "Overview" panel for your workspace.

First, use wb status to confirm that the Workbench CLI is set to the correct workspace. Then you can find your workspace's service account address by running the following command:

wb utility execute env | grep GOOGLE_SERVICE_ACCOUNT_EMAIL
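Rather than copying the address out of the grep output by hand, you can extract just the value into a shell variable. The helper below is a hypothetical sketch (env_value is an illustrative name): it reads NAME=value lines, such as the output of env, and prints the value for the first line whose name matches exactly.

```shell
# Hypothetical helper: read NAME=value lines on stdin and print the value of
# the first line whose name matches exactly (the ^ anchor avoids partial matches).
# Usage: ... | env_value NAME
env_value() {
  sed -n "s/^$1=//p" | head -n 1
}

# Example:
#   SA="$(wb utility execute env | env_value GOOGLE_SERVICE_ACCOUNT_EMAIL)"
#   echo "$SA"
```

If the variable is absent, env_value prints nothing, so an empty SA means you should re-check the workspace with wb status.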

Then edit the following with your details:

dsub --project <YOUR_PROJECT_ID> --provider google-cls-v2 --logging gs://<YOUR_BUCKET>/logs \
    --env WORKSPACE_ID=<YOUR_WORKSPACE_ID> \
    --service-account <YOUR_WORKSPACE_SERVICE_ACCOUNT> \
    --network network --subnetwork subnetwork \
    --image us-central1-docker.pkg.dev/<YOUR_PROJECT_ID>/<YOUR_REPO_NAME>/<CONTAINER_NAME>:<TAG> \
    --script test-script.sh --wait
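The notebook and local-machine dsub commands differ only in where the project ID and service account come from. If you launch such jobs repeatedly, a small wrapper keeps the flags in one place. This is a hypothetical bash helper: the name run_wb_dsub and the DRY_RUN switch are illustrative and not part of dsub or the Workbench CLI. It passes exactly the flags used in the commands in this section.

```shell
# Hypothetical wrapper around the dsub invocation used in this document.
# Set DRY_RUN=1 to print the command instead of running it.
# Usage: run_wb_dsub PROJECT_ID SERVICE_ACCOUNT LOGGING_URI IMAGE_URI WORKSPACE_ID SCRIPT
run_wb_dsub() {
  if [ "$#" -ne 6 ]; then
    echo "usage: run_wb_dsub PROJECT_ID SERVICE_ACCOUNT LOGGING_URI IMAGE_URI WORKSPACE_ID SCRIPT" >&2
    return 2
  fi
  # Collect the flags in a bash array so each value stays a single argument.
  local args=(
    --project "$1"
    --provider google-cls-v2
    --logging "$3"
    --env "WORKSPACE_ID=$5"
    --service-account "$2"
    --network network --subnetwork subnetwork
    --image "$4"
    --script "$6"
    --wait
  )
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo dsub "${args[@]}"
  else
    dsub "${args[@]}"
  fi
}

# Example in a notebook app (replace the remaining placeholders):
#   run_wb_dsub "$GOOGLE_CLOUD_PROJECT" "$GOOGLE_SERVICE_ACCOUNT_EMAIL" gs://<YOUR_BUCKET>/logs \
#     "us-central1-docker.pkg.dev/${GOOGLE_CLOUD_PROJECT}/<YOUR_REPO_NAME>/<CONTAINER_NAME>:<TAG>" \
#     <YOUR_WORKSPACE_ID> test-script.sh
```

Running once with DRY_RUN=1 lets you inspect the fully expanded command before submitting a real job.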

Summary

This document showed how to build and use a container image that can run the Workbench CLI. We used dsub as an example since it provides a convenient way to set up a node using the workspace's service account, and run a script in the container.

However, the Workbench CLI container could be used in many other contexts. The Dockerfile could be edited to include other installs or include an ENTRYPOINT specification.

Last Modified: 11 December 2024